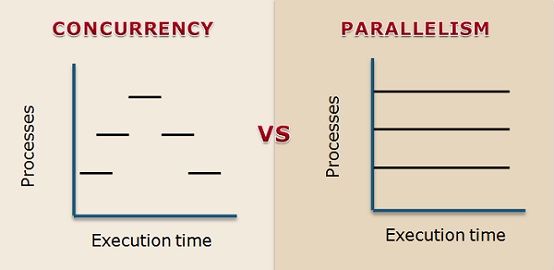

Concurrency and parallelism are related terms, but they are not the same, and they are often mistaken for one another. The crucial difference is that concurrency is about dealing with many things at once: it handles several tasks over overlapping time periods, giving the illusion of simultaneity and, in effect, hiding latency. Parallelism, by contrast, is about doing many things at the same instant in order to increase speed.

Processes that execute in parallel are necessarily concurrent, since they are all in progress during the same period. Concurrently executing processes, however, need not be parallel: on a single processing unit they are interleaved and never run at the same instant.

Comparison Chart

| Basis for comparison | Concurrency | Parallelism |
|---|---|---|
| Basic | The act of managing multiple computations whose executions overlap in time. | The act of running multiple computations at the same instant. |
| Achieved through | Interleaved operation | Multiple CPUs |
| Benefits | More work accomplished in a given time. | Improved throughput, computational speed-up |
| Makes use of | Context switching | Multiple CPUs running multiple processes. |
| Processing units required | One is sufficient | Multiple |
| Example | Running multiple applications at the same time. | Running a web crawler on a cluster. |

Definition of Concurrency

Concurrency is a technique for decreasing the response time of a system, and it can be achieved with a single processing unit. A task is divided into multiple parts, and these parts make progress during overlapping time periods but are not executed at the same instant. This produces the illusion of parallelism, although the chunks of the task are not actually processed in parallel. Concurrency is obtained by interleaving the operation of processes on the CPU, in other words through context switching: control is switched between different threads of processes so swiftly that the switching goes unnoticed. That is why it looks like parallel processing.

Concurrency gives multiple parties access to shared resources and therefore requires some form of communication. The system works on a thread while it is making useful progress; when the thread stops making progress (for instance, while it waits), the system halts it and switches to a different thread that can.
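The interleaving described in this section can be sketched with a toy scheduler. This is only an illustration under stated assumptions: the names `task` and `run_round_robin` are hypothetical, and the tasks hand over control voluntarily at each `yield`, whereas a real operating system switches contexts preemptively.

```python
from collections import deque

def task(name, steps, log):
    """A toy task: records one unit of work, then gives up the CPU."""
    for i in range(steps):
        log.append(f"{name}{i}")
        yield  # switch point (stands in for a context switch)

def run_round_robin(tasks):
    """Run generator-based tasks one step at a time on a single thread."""
    ready = deque(tasks)
    while ready:
        current = ready.popleft()
        try:
            next(current)          # run the task until its next yield
            ready.append(current)  # not finished: back of the queue
        except StopIteration:
            pass                   # finished: drop it from the queue

log = []
run_round_robin([task("A", 3, log), task("B", 2, log)])
print(log)  # ['A0', 'B0', 'A1', 'B1', 'A2']
```

Both tasks are in progress over the same period, yet only one of them runs at any instant, which is exactly the single-processor situation the definition describes.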

Definition of Parallelism

Parallelism is devised to increase computational speed by using multiple processors. It is a technique of executing different tasks simultaneously, at the same instant. It involves several independent processing units or computing devices that operate in parallel and perform tasks in order to increase computational speed-up and improve throughput.
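As a rough sketch of this idea, the standard-library `concurrent.futures.ProcessPoolExecutor` can hand independent chunks of a CPU-bound job to separate worker processes. The helper names `split`, `sum_of_squares`, and `parallel_sum_of_squares` are made up for this illustration, and whether the workers really run at the same instant depends on the machine having more than one core.

```python
from concurrent.futures import ProcessPoolExecutor

def split(data, parts):
    """Split a sequence into `parts` contiguous chunks of near-equal size."""
    size, extra = divmod(len(data), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(data[start:end])
        start = end
    return chunks

def sum_of_squares(chunk):
    """CPU-bound work that can be done independently on one chunk."""
    return sum(x * x for x in chunk)

def parallel_sum_of_squares(data, workers=4):
    """Give each worker process one chunk, then combine the partial results.

    Assumption: the machine has several cores, so the operating system
    can run the worker processes at the same instant.
    """
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(sum_of_squares, split(data, workers)))
```

A call such as `parallel_sum_of_squares(list(range(1_000_000)))` should be placed under an `if __name__ == "__main__":` guard, because on platforms that start worker processes by re-importing the main module, unguarded top-level code would run again in every worker.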

Parallelism results in the CPU and I/O activities of one process overlapping with the CPU and I/O activities of another process. With concurrency, in contrast, speed is increased by overlapping the I/O activities of one process with the CPU activities of another process.
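The concurrent case, where the I/O of one activity overlaps with the CPU work of another, can be sketched with two threads. This is a minimal illustration rather than a benchmark: `fake_io`, `cpu_work`, and `overlapped` are hypothetical names, and `time.sleep` only stands in for a real blocking I/O call.

```python
import threading
import time

def fake_io(delay, results):
    """Simulated blocking I/O: the thread waits and does not use the CPU."""
    time.sleep(delay)
    results.append("io done")

def cpu_work(n):
    """CPU-bound work carried out while the other thread is waiting."""
    return sum(i * i for i in range(n))

def overlapped(delay=0.2, n=200_000):
    """Start the I/O wait in one thread and compute in the calling thread."""
    results = []
    start = time.perf_counter()
    io_thread = threading.Thread(target=fake_io, args=(delay, results))
    io_thread.start()      # the I/O wait begins ...
    total = cpu_work(n)    # ... while this thread keeps the CPU busy
    io_thread.join()       # wait until the I/O has completed
    elapsed = time.perf_counter() - start
    return total, results, elapsed

total, results, elapsed = overlapped()
print(total, results, f"{elapsed:.2f}s")
```

Because the waiting thread does not need the CPU, the total time should be close to the longer of the two activities rather than to their sum, even on a single processing unit.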

Key Differences Between Concurrency and Parallelism

- Concurrency is the act of managing multiple tasks whose executions overlap in time. Parallelism, on the other hand, is the act of running multiple tasks at the same instant.

- Parallelism is obtained by using multiple CPUs, as in a multiprocessor system, and running different processes on these processing units. In contrast, concurrency is achieved by interleaving the operation of processes on the CPU, in particular through context switching.

- Concurrency can be implemented with a single processing unit, whereas parallelism cannot: it requires multiple processing units.

Conclusion

In summary, concurrency and parallelism are not the same thing and can be distinguished. Concurrency involves different tasks running over overlapping time periods. Parallelism, on the other hand, involves different tasks running simultaneously, and these tend to have the same start and end times.
